As a hobby outside of Ankota, I teach courses about technology at Babson College, which is a business school. My fear and motivation is that business leaders (and home care agency leaders) will relegate technology matters to geeks like me and not make the effort to keep themselves up-to-date with what's going on in the tech world.

In a previous era, this wouldn't have been a problem, but living in a world with companies like Uber and Netflix, we have to realize that doing things the way they've always been done is unlikely to cut it as we move towards the future.

I've added this additional preamble to this post because I'd imagine that most home care agency managers are likely to consider all matters related to artificial intelligence (AI) to be beyond their grasp. If you've read this far, give me a chance to make the case that AI is becoming more and more mainstream and something that will ultimately affect home care.

To begin, realize that the Netflix recommendations for what you might want to watch next, the Amazon Echo (Alexa) or Siri's ability to listen to your questions and often give you a good answer, the Amazon.com "you might also like ____" recommendations, or the ability of a Bank of America ATM to read a hand-written check and know how much it was made out for are everyday examples of AI.

Machine Learning (ML) vs Deep Learning (DL)

If you're still reading then I'm pretty impressed, but let's explore further...

Machine learning models are a form of AI that apply a mathematical formula to predict an outcome. These models are "trained" with real-life data where the answer is "labeled." Let's take the example of loan application risk calculation. You can start building a model for this by looking at a mortgage company's loan history. If you had an Excel spreadsheet with one row per loan and columns like loan amount, applicant demographics, applicant credit score, applicant income, applicant savings, and whether the loan was defaulted or not, you could imagine that a mathematical model could be constructed to make a pretty good prediction of whether a new loan application should be approved. Someone good at math could likely figure out the formula, and now there are computer programs that can figure out the formula for you. The ability to figure out the formula and apply it is called a machine learning model, and this is the technology used for the examples given above.
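For readers who'd like to see the loan idea in action, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the dozen loans are made up, only two columns (credit score and savings) are used, and the technique (logistic regression trained by gradient descent) is just one common way a program can "figure out the formula." It is not how any particular lender does it.

```python
import math

# Hypothetical, made-up loan history: (credit_score, savings_in_thousands, defaulted)
# defaulted: 1 = the loan went bad, 0 = the loan was repaid
LOANS = [
    (580, 2, 1), (610, 1, 1), (620, 5, 1), (640, 3, 1),
    (660, 8, 0), (700, 20, 0), (720, 35, 0), (750, 50, 0),
    (690, 4, 1), (710, 15, 0), (600, 10, 1), (770, 60, 0),
]

def scale(credit_score, savings_k):
    """Put both columns on a roughly 0-to-1 range so training behaves well."""
    return [(credit_score - 300) / 550, savings_k / 100]

def predict_default_probability(weights, bias, features):
    """The 'formula': a weighted sum squashed into a 0-to-1 probability."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1 / (1 + math.exp(-z))

def train(loans, steps=5000, learning_rate=0.5):
    """Nudge the weights a little at a time to shrink the prediction error."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for credit_score, savings_k, defaulted in loans:
            x = scale(credit_score, savings_k)
            error = predict_default_probability(weights, bias, x) - defaulted
            grad_w = [g + error * xi for g, xi in zip(grad_w, x)]
            grad_b += error
        weights = [w - learning_rate * g / len(loans) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(loans)
    return weights, bias

weights, bias = train(LOANS)

# Score two new (also made-up) applications the model has never seen.
risky = predict_default_probability(weights, bias, scale(590, 1))
safe = predict_default_probability(weights, bias, scale(760, 55))
print(f"Low score, little savings: {risky:.0%} chance of default")
print(f"High score, big savings:   {safe:.0%} chance of default")
```

The point isn't the math. It's that the program is handed labeled history and works out the weights on its own, which is what "training" means.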

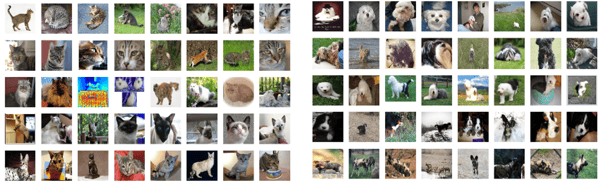

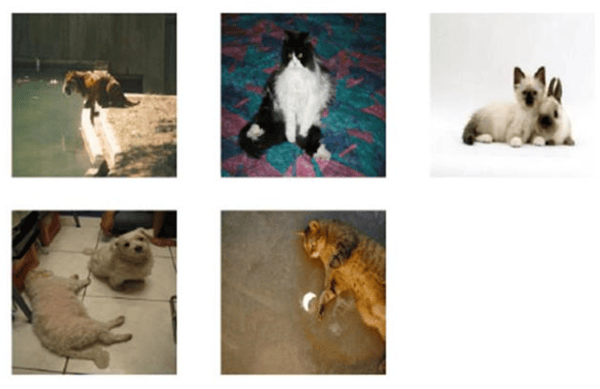

So what is "Deep Learning?" The result of deep learning is similar to machine learning, but the way it gets there is different. In the machine learning examples above there are certain "telltale factors" that play a big role in the model. For example, someone with a credit score over 700 and $50K in the bank is unlikely to default on a $15K car loan. Choosing the parameters and their impact (in this case credit score and savings) is called "feature extraction," and machine learning models are based on feature extraction. Deep learning is different: it uses a neural network, modeled loosely on the human brain. In the brain we have a whole bunch of receptors (sight, heat, touch, smell, taste, sound, words, numbers, music, etc.) that "fire" when stimulated, and the result of that is our reaction (fear, defense, joy, wonder, delight, etc.). We don't exactly know how it works, but we've all observed a 2-year-old say "doggie" or "kitty" and be right, even when they may have only seen a handful of dogs or cats in their life. Deep learning works in a similar manner: the answers are good, but you don't necessarily know why.

Cats vs. Dogs

In fact, "cats vs dogs" was one of the early deep learning experiments (2015).

A DL model was trained with 13,000 images labeled as cats or dogs, and then it was tested with 500 images that it hadn't seen before. It predicted 485 of them (97%) with high confidence and 10 (2%) with moderate confidence, and got 5 (1%) wrong. The ones that it got wrong are shown below:

How Could This Possibly Affect Home Care?

We produce a lot of data in home care, most notably claims data. But historically, claims data has really only looked at a patient/client ID, a procedure code and a number of units. In the new era of Electronic Visit Verification (EVV), much more data is provided, including caregiver, start time, end time and location information (via GPS or caller ID).
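To make those EVV fields concrete, here is a small Python sketch of what a visit record might look like and one simple check that the richer data makes possible: spotting a caregiver who is recorded with two different clients at the same time. To be clear about the assumptions: the field names, IDs and sample visits are made up, and this is a hand-written rule rather than a machine learning model. It's only meant to show the kind of pattern that becomes visible once start times, end times and caregivers are in the data.

```python
from dataclasses import dataclass
from datetime import datetime
from itertools import combinations

@dataclass
class Visit:
    """One EVV record. Field names are illustrative, not from any EVV standard."""
    client_id: str
    caregiver_id: str
    start: datetime
    end: datetime
    location: str  # stand-in for GPS or caller ID information

def overlapping_visits(visits):
    """Return pairs of visits where one caregiver appears with two clients at once."""
    flagged = []
    for a, b in combinations(visits, 2):
        same_caregiver = a.caregiver_id == b.caregiver_id
        different_client = a.client_id != b.client_id
        times_overlap = a.start < b.end and b.start < a.end
        if same_caregiver and different_client and times_overlap:
            flagged.append((a, b))
    return flagged

# Made-up sample data for a single morning.
visits = [
    Visit("client-1", "cg-7", datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 11, 0), "12 Elm St"),
    Visit("client-2", "cg-7", datetime(2024, 1, 8, 10, 30), datetime(2024, 1, 8, 12, 0), "98 Oak Ave"),
    Visit("client-3", "cg-9", datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 10, 0), "5 Pine Rd"),
]

flagged = overlapping_visits(visits)
for a, b in flagged:
    print(f"Caregiver {a.caregiver_id} overlaps: {a.client_id} and {b.client_id}")
```

None of this was possible with claims data that held only a client ID, a procedure code and a number of units.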

If Netflix can predict what shows and movies you'll likely enjoy, it's not a big leap to believe that the receivers of your EVV data will be able to predict which home care agencies are using EVV or creating fraudulent records. This is only the beginning:
